SUMMARY

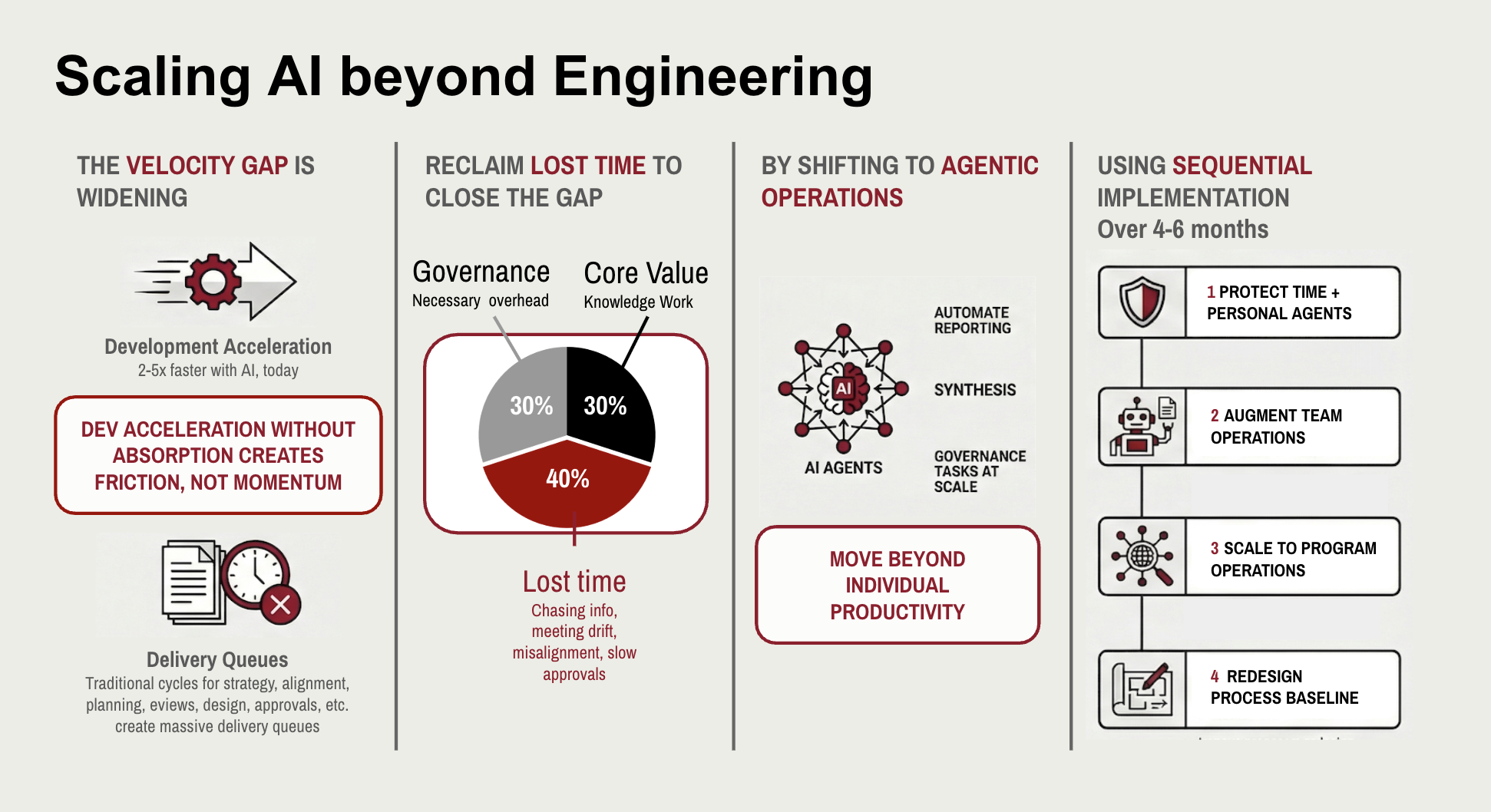

The single factor that will separate AI leaders from laggards over the next 12 to 18 months is their level of investment in agentic operations, not which models they use or how many developers they have trained. What matters is whether they invest in AI-augmented systems that remove the organizational friction surrounding development. The tools exist today. The ROI is measurable within months. This is a no-regret move for any organization serious about capturing the full value of AI. What follows explains why, and how to start.

The System Is the Problem

You've invested in AI coding tools. Development teams are running their cycles faster, maybe significantly faster. Early signs are promising. So why doesn't it feel like your organization is moving faster?

The answer isn't in your code. It's in everything around it, and that is not a new phenomenon.

In the 1980s, W. Edwards Deming made an argument that rattled the management world: 90 to 95 percent of an organization's performance problems are caused by the system—the processes, structures, and culture—not by the individuals working within it. Talent, he argued, is rarely the constraint. The system is.

That insight is even more consequential now.

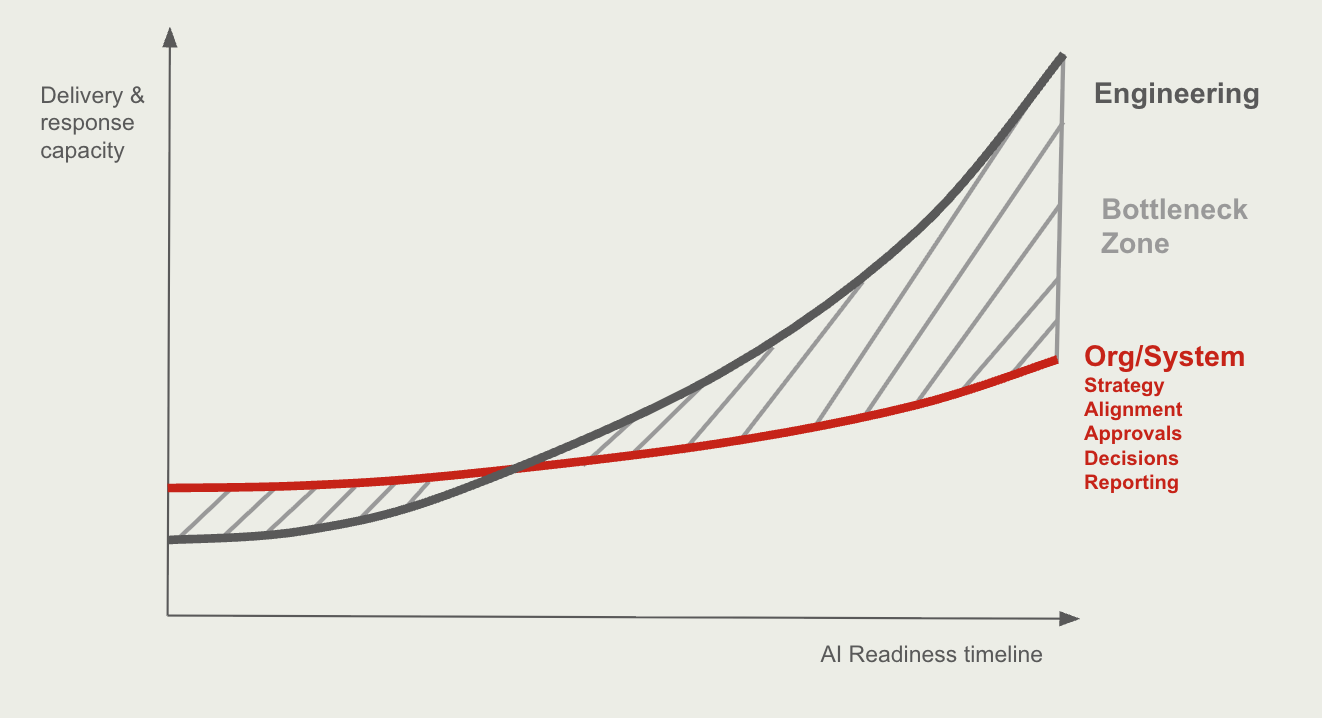

AI coding agents like Claude Code and Gemini are giving development teams a genuine velocity multiplier. Tasks that once took days now take hours. Software delivery cycles that once ran in weeks are compressing toward days. The potential to accelerate product development by 2 to 5 times is not theoretical—it is already happening in teams that have adopted agentic workflows.

But Deming's observation points to an uncomfortable corollary: if development velocity accelerates while the surrounding organizational system stays the same, you don't get 2 to 5 times the output. You get chaos.

Requirements that can't be written fast enough. Decisions that can't be made fast enough. Reviews that create queues. Approvals that create bottlenecks. Status reports that arrive stale. The gains from AI-augmented development will remain marginal until the operational system around development can absorb and respond to faster delivery.

Acceleration without absorption creates friction, not momentum.

Where Time Actually Goes

Most organizations have a working theory about where their knowledge workers spend their time. Only a minority have treated it as a signal for drastic change.

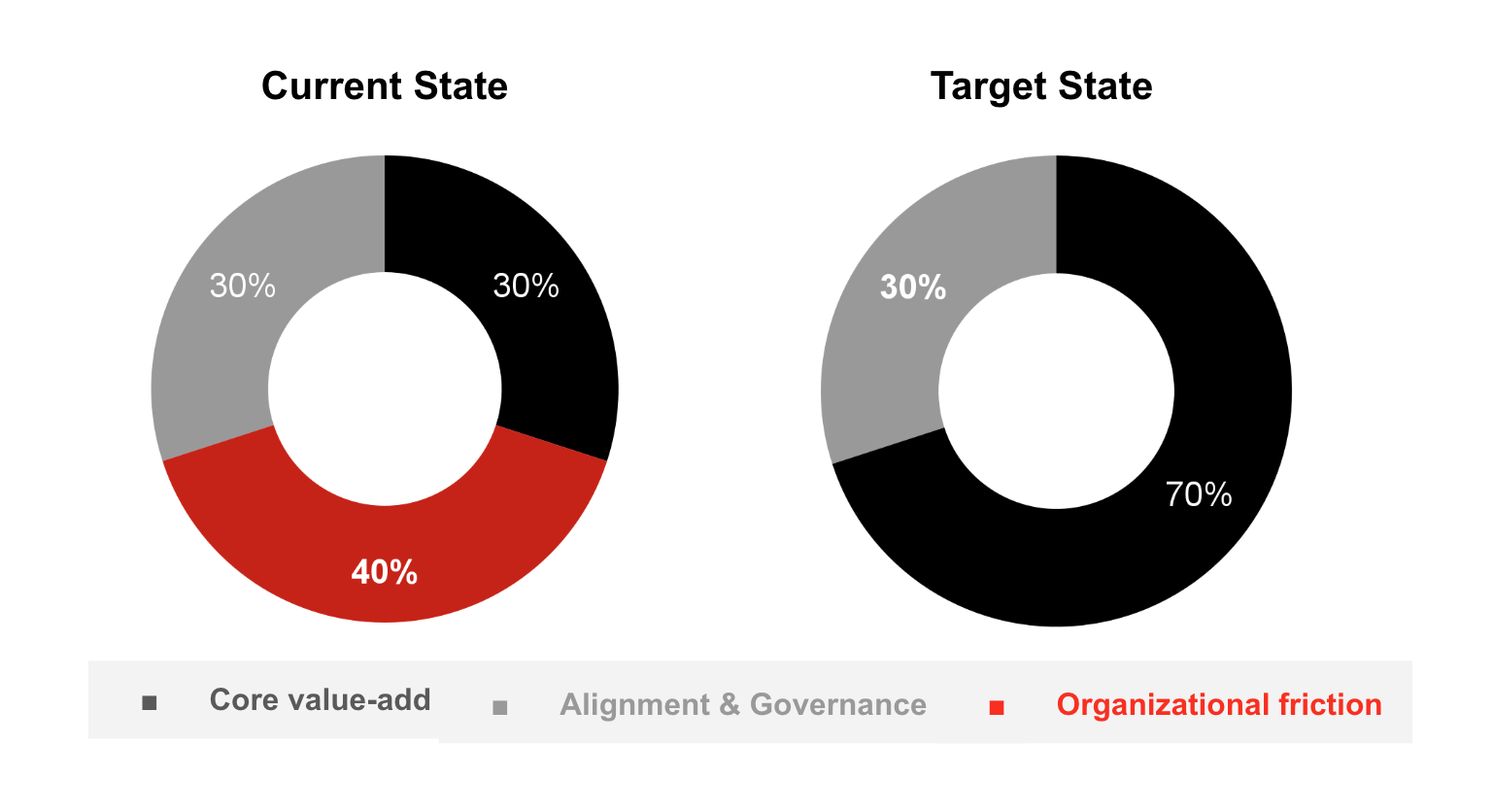

Ask any program manager, product manager, or technical lead to honestly account for a given month, and a pattern emerges: fewer than 30 percent of hours go toward the core value-creating activities their role was designed for. The remaining 70 percent or more disappears into what might be called organizational friction: the tax the system charges for operating within it.

Friction slows progress in familiar ways: chasing information, waiting on slow approvals, sitting in unnecessary status meetings, producing reports that are quickly outdated, and resolving conflicts that surfaced late. Context-switching among these tasks cuts into focused work. Some overhead, such as necessary alignment and governance, is essential, but it is often crowded out and made less effective by the sheer volume of unnecessary friction.

The math is damaging enough today. With AI doubling or tripling development output, that math becomes untenable. A delivery pipeline that runs twice as fast will simply surface organizational friction twice as fast, putting unsustainable pressure on the overall system.

The goal is to redesign what we spend most of our time on.

The Undervalued Role of AI Agents

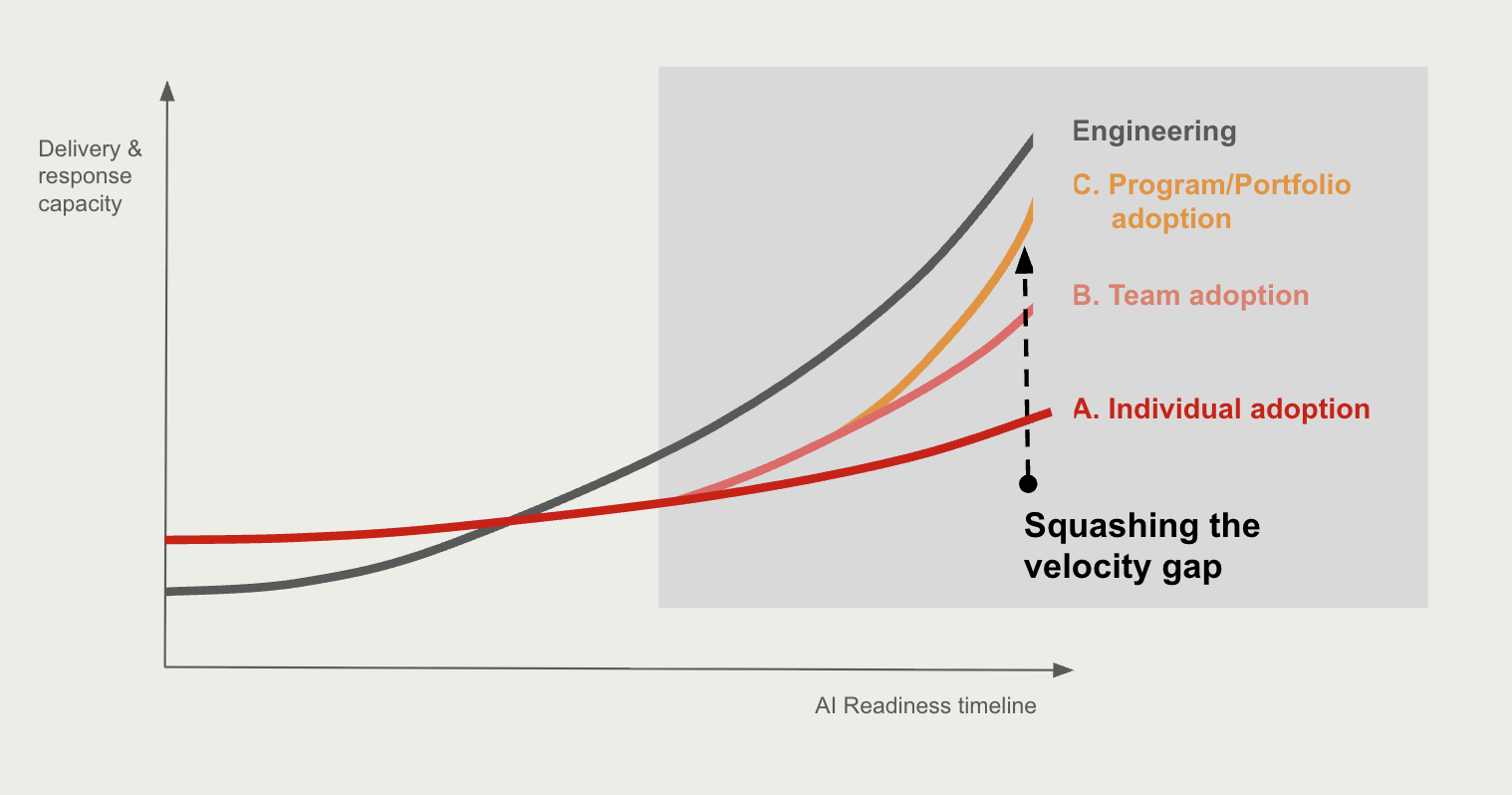

Much of the conversation about enterprise AI has focused on individual productivity—helping a developer write code faster, helping an analyst draft a summary, helping a writer brainstorm. These gains are real. They are also insufficient.

Generic AI models are capable of broad, high-quality output on individual tasks. What they are not good at is executing repetitive processes reliably at scale. Inconsistency undermines trust. And untrusted outputs require human review, which recreates the bottleneck in a different form.

This is the specific problem that AI agents solve. An agent is not a smarter chatbot. The agent can provide governance and structure to common tasks: a defined set of instructions that specifies what task runs, at what cadence, from what sources, in what order, and by what criteria. Agents do not replace human judgment—they remove the organizational friction that delays and distorts it.
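As a minimal sketch of what such a defined set of instructions might look like, the following Python snippet models an agent definition as plain data. Everything here (`AgentSpec`, `weekly_status`, the cadence values, the source names) is a hypothetical illustration, not the API of any real agent framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical description of one recurring agent task."""
    task: str                  # what task runs
    cadence: str               # at what cadence, e.g. "weekly"
    sources: tuple[str, ...]   # from what sources
    steps: tuple[str, ...]     # in what order
    criteria: tuple[str, ...]  # by what criteria the output is judged

    def validate(self) -> list[str]:
        """Return a list of problems; an empty list means the spec is complete."""
        problems = []
        if not self.task.strip():
            problems.append("task is empty")
        # Assumed set of allowed cadences, chosen only for illustration.
        if self.cadence not in {"daily", "weekly", "monthly"}:
            problems.append(f"unknown cadence: {self.cadence!r}")
        for name in ("sources", "steps", "criteria"):
            if not getattr(self, name):
                problems.append(f"{name} must not be empty")
        return problems


weekly_status = AgentSpec(
    task="Compile program status report",
    cadence="weekly",
    sources=("issue tracker", "release calendar"),
    steps=("collect", "summarize", "flag risks"),
    criteria=("every open risk has an owner",),
)
```

Writing the definition down as data rather than as an ad hoc prompt is one way to make the same task run the same way every time, which is the consistency the paragraph above argues for.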

The same patterns that have made AI agents valuable in software development—automated testing, continuous integration, structured code review—can be applied to the operational tasks that currently consume the majority of organizational time:

- Common reporting that is manually compiled from sources that agents can access directly

- Status synthesis across programs, surfacing misalignments before they become meetings

- Decision-support outputs that give leaders accurate, current information rather than prepared narratives

- Review pipelines that apply consistent quality checks to documents and plans the same way linters apply them to code
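To make the linter analogy in the last item concrete, here is a small sketch of a document check pipeline in Python. The three rules (`has_owner`, `has_date`, `no_placeholders`) are invented for illustration; a real review pipeline would encode whatever criteria an organization actually applies to its plans.

```python
import re
from typing import Callable, Optional

# Each rule takes the document text and returns a finding, or None if it passes.
Rule = Callable[[str], Optional[str]]


def has_owner(text: str) -> Optional[str]:
    found = re.search(r"^Owner:\s*\S+", text, re.MULTILINE)
    return None if found else "missing 'Owner:' line"


def has_date(text: str) -> Optional[str]:
    found = re.search(r"\b\d{4}-\d{2}-\d{2}\b", text)
    return None if found else "no ISO date (YYYY-MM-DD) found"


def no_placeholders(text: str) -> Optional[str]:
    found = re.search(r"\b(TBD|TODO)\b", text)
    return "contains TBD/TODO placeholder" if found else None


RULES: list[Rule] = [has_owner, has_date, no_placeholders]


def lint(text: str) -> list[str]:
    """Apply every rule in a fixed order and collect the findings."""
    return [msg for rule in RULES if (msg := rule(text)) is not None]


plan = "Owner: dana\nTarget: 2025-03-01\nBudget: TBD\n"
print(lint(plan))  # ['contains TBD/TODO placeholder']
```

Because the rules are fixed and ordered, two reviewers (or two runs) cannot disagree about whether a plan passes, which is the property that makes linters trusted in code review.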

The ROI logic here is straightforward. Automating manual individual tasks saves meaningful time per person per week. At the individual level, four reclaimed hours per week is a significant gain. Across a team, the individual gains compound, and team process transformation can reclaim another two days per week per team member. Across a program team, it is the difference between a quarterly planning process that consumes three weeks every quarter and one that takes one afternoon per month.
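The figures above can be put side by side with a few lines of arithmetic. This is a rough sketch, not a measurement: it assumes a 40-hour week, an 8-hour day, and a 4-hour afternoon, and it reads "another two days" as additive to the four individual hours.

```python
HOURS_PER_WEEK = 40    # assumption: standard working week
HOURS_PER_DAY = 8      # assumption
AFTERNOON_HOURS = 4    # assumption

individual_hours = 4                   # from the text: reclaimed per person per week
team_hours = 2 * HOURS_PER_DAY         # from the text: another two days per week
total_hours = individual_hours + team_hours
share_of_week = total_hours / HOURS_PER_WEEK

# Planning: three weeks per quarter versus one afternoon per month.
planning_before = 3 * HOURS_PER_WEEK   # hours per quarter
planning_after = 3 * AFTERNOON_HOURS   # three months in a quarter

print(f"Reclaimed per person: {total_hours} h/week ({share_of_week:.0%} of the week)")
print(f"Planning per quarter: {planning_before} h -> {planning_after} h")
# Reclaimed per person: 20 h/week (50% of the week)
# Planning per quarter: 120 h -> 12 h
```

Under these assumptions, the reclaimed time amounts to half of a working week per person, and planning effort per quarter falls by a factor of ten.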

First Implementation Steps That Work

Momentum is key. Organizations that sequence AI adoption deliberately will see compounding returns within a quarter (assuming engineers have already widely adopted AI). Organizations that attempt broad AI transformation all at once typically fail to generate momentum and end up doing more transformation communication than acceleration.

Step 1 — Create protected time (Month 1). The first constraint is not technical. It is attentional. Every person involved needs at minimum four hours per week to design and implement operational improvements. Crucially, that time must come from the 70 percent—from friction, not from core work. Reducing a standing set of meetings for one month is sufficient to create the space. Add facilitation through short workshops. Protect the time explicitly.

Step 2 — Automate common reports (Months 1–2). The highest-leverage early targets are the manual reports that individuals and teams produce on a recurring basis: development activity summaries, risk and issue logs, sprint or cycle reviews, research digests. These are almost universally sourced from systems that agents can access directly. Automating them removes recurring cognitive overhead, reduces the requests and ad hoc meetings they generate, and forces a discipline around sources of truth. Organizations that complete this phase consistently recover four or more hours per person per week, enough to produce a positive return on the time invested within the same period.

Reports are a good starting point because of their steady frequency, high visibility, ease of evaluation and refinement, and minimal disruption if the team needs to revert to the previous process.
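A recurring risk and issue log, for example, can be compiled directly from tracker records instead of by hand. The sketch below uses a hard-coded list of hypothetical records in place of a real issue-tracker query; the field names (`status`, `severity`, `owner`) and the `risk_report` function are assumptions made for illustration.

```python
from collections import Counter
from datetime import date

# Hypothetical records, standing in for rows an agent would read from an issue tracker.
issues = [
    {"id": "A-1", "status": "open", "severity": "high", "owner": "dana"},
    {"id": "A-2", "status": "closed", "severity": "low", "owner": "lee"},
    {"id": "A-3", "status": "open", "severity": "high", "owner": None},
    {"id": "A-4", "status": "open", "severity": "low", "owner": "lee"},
]


def risk_report(records: list[dict], as_of: date) -> str:
    """Summarize open items by severity and list open items that have no owner."""
    open_items = [r for r in records if r["status"] == "open"]
    by_severity = Counter(r["severity"] for r in open_items)
    unowned = [r["id"] for r in open_items if not r["owner"]]
    lines = [
        f"Risk and issue log as of {as_of.isoformat()}",
        f"Open items: {len(open_items)} of {len(records)}",
    ]
    lines += [f"  {sev}: {count}" for sev, count in sorted(by_severity.items())]
    lines.append(f"Open items without an owner: {', '.join(unowned) or 'none'}")
    return "\n".join(lines)


print(risk_report(issues, date(2025, 1, 6)))
```

Because the report is derived from the tracker on every run, it cannot drift from the source of truth the way a manually maintained log can.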

Step 3 — Expand to program-level reporting (Months 2–4). Once team-level reporting is automated, the same logic applies upward: status reports to leadership, release readiness assessments, architectural adherence reviews. At this stage, reporting agents shift from being individual productivity tools to being system-level infrastructure—structured pipelines that surface cross-team misalignments, overlaps, and risks that currently appear only in meetings, if at all.
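One simple form of cross-team misalignment is a team that needs a deliverable before the providing team plans to ship it. The following sketch shows how a reporting pipeline might flag that case; the team names, the `auth-api-v2` dependency, and the tuple layout are all hypothetical.

```python
from datetime import date

# Hypothetical status entries: (team, dependency, role, date)
statuses = [
    ("payments", "auth-api-v2", "provides", date(2025, 3, 14)),
    ("checkout", "auth-api-v2", "needs", date(2025, 3, 1)),
    ("mobile", "auth-api-v2", "needs", date(2025, 3, 20)),
]


def find_misalignments(entries):
    """Return (team, dependency, days_short) for each need dated before its delivery."""
    delivery = {dep: when for _, dep, role, when in entries if role == "provides"}
    gaps = []
    for team, dep, role, when in entries:
        if role == "needs" and dep in delivery and when < delivery[dep]:
            gaps.append((team, dep, (delivery[dep] - when).days))
    return gaps


print(find_misalignments(statuses))  # [('checkout', 'auth-api-v2', 13)]
```

A check like this surfaces the conflict as soon as both teams have recorded their dates, rather than when it first comes up in a meeting.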

Step 4 — Redesign processes around the new baseline (Month 4 and beyond). By this point, many of the activities that once consumed the majority of organizational time have been automated or dramatically compressed. Week-long quarterly planning becomes a monthly two-hour review. Portfolio reviews that once required weeks of preparation become structured agent outputs. Designers, Architects, Product, Legal, Finance, and Developers will each keep their core responsibilities but can start collaborating in more effective ways. The focus shifts from doing operational work to orchestrating it: a materially different role, and a more valuable one.

What Leaders Should Do Now

The return on investing in operational AI augmentation is measurable and near-term. The tools required are already available. Agents that run on defined cadences, from defined sources, toward defined outputs are already proven in software development and transfer well to operational work.

The investment required is not primarily financial. It is attentional: protected time for people to redesign the work that surrounds their core work. Organizations that make that investment in the next eighteen months will not simply be more efficient. They will have built the organizational infrastructure to operate at a fundamentally different pace, one that matches the velocity AI is already making possible on the development side.

The velocity gap is real. Closing it is a choice.